Series-Connected Voltage Domains for Highly-efficient Data Center Power Delivery

Josiah McClurg with adviser R. C. N. Pilawa-Podgurski

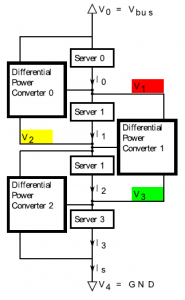

Today’s ac and dc power distribution architectures process all of the power delivered to the load through step-down power converters, whose practical conversion efficiencies are limited. Because data center power density is expected to increase, it would be ideal to decouple the conversion losses within a data center from the total power delivered to the servers. Figure 23 shows a proposed power delivery architecture that does this. When the servers are connected electrically in series, the current required by the first server flows directly from a high-voltage dc distribution bus. Instead of returning immediately to ground, this current is then “recycled” as it passes through each successive server.

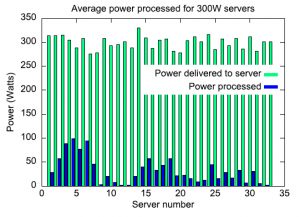

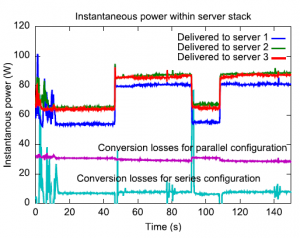

However, if the computational load of the servers is not intelligently managed, individual server input voltages may drift outside their design limits and damage the motherboard. Figure 24 shows how a voltage regulation technique called differential power processing takes advantage of the series-connected architecture to deliver more power than it must process. Figure 25 compares the measured instantaneous power conversion losses of the prototype series-connected server cluster illustrated in Figure 24, operating under a web traffic load, with those of the same server cluster configured for a parallel power distribution architecture.

This particular experiment showed more than a 400 per cent reduction in conversion losses. Other experimental results demonstrate that intelligent software scheduling of CPU loads can further reduce power losses in this same prototype series-connected server cluster.
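The idea that differential power processing delivers more power than it must process can be illustrated with a small numerical sketch. It is not the prototype's actual topology or control scheme. It assumes an idealized stack in which every server is held at the same voltage, each differential converter handles only the difference between its server's current and the mean stack current, and conversion loss is a fixed fraction of processed power. All function names, currents, voltages, and efficiencies below are hypothetical values chosen for illustration.

```python
from statistics import mean


def conventional_processed_power(currents_a, server_voltage_v):
    """Parallel architecture: every watt delivered passes through a converter."""
    return sum(server_voltage_v * i for i in currents_a)


def dpp_processed_power(currents_a, server_voltage_v):
    """Idealized series stack (assumption): each differential converter
    processes only the mismatch between its server's current and the
    mean stack current."""
    stack_current = mean(currents_a)
    return sum(server_voltage_v * abs(i - stack_current) for i in currents_a)


def conversion_loss(processed_power_w, efficiency):
    """Simplified loss model (assumption): loss is proportional to processed power."""
    return processed_power_w * (1.0 - efficiency)


# Hypothetical operating point: four servers at 12 V with slightly unequal loads.
currents = [4.0, 5.0, 6.0, 5.0]   # amperes, illustrative only
voltage = 12.0                    # volts per server, illustrative only
efficiency = 0.9                  # assumed converter efficiency

conventional_w = conventional_processed_power(currents, voltage)  # 240.0 W
dpp_w = dpp_processed_power(currents, voltage)                    # 24.0 W

print(f"conventional: {conventional_w:.1f} W processed, "
      f"{conversion_loss(conventional_w, efficiency):.1f} W lost")
print(f"differential: {dpp_w:.1f} W processed, "
      f"{conversion_loss(dpp_w, efficiency):.1f} W lost")
```

Under these assumptions the processed power, and therefore the loss, shrinks as the server loads become better matched, and it falls to zero when all servers draw the same current. This is consistent with the observation that scheduling CPU loads intelligently can reduce losses further.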

This work is funded through the National Science Foundation Graduate Research Fellowship.